One of the most unexpected announcements at last year’s Oculus Connect was the addition of built-in headphones to the Crescent Bay prototype, along with a partnership with VisiSonics to bring full 3D audio to Oculus experiences. As CEO Brendan Iribe explained, “Audio is an essential ingredient for immersive virtual reality.”

Oculus wants developers to focus just as much on the audio side of virtual reality experiences as on the visual side. The company doubled down on audio by releasing an Audio SDK last April. Now, at Oculus Connect 2, we get to see how far it has come.

Syncing Audio and Visuals in VR

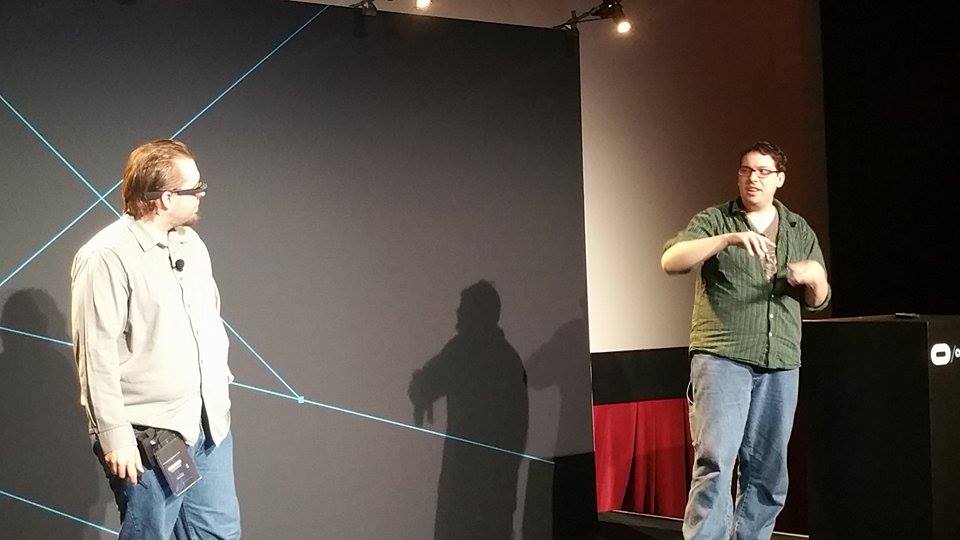

The first audio talk of the conference was “Hearing with Our Eyes: Interactive Music for VR” by Justin Moravetz and Jake Kaufman of ZeroTransform, the team behind Vanguard V, Proton Pulse, and the upcoming NUREN. It was a fascinating look at how closely audio and visuals can be linked in VR.

They organized their talk around three ways music and virtual reality can interact, each drawn from one of the experiences they have built. For Proton Pulse, they synced the scene to the music so that every pulse and scroll in the game is linked to a different tone. This let the music trigger events in the scene, making the experience feel more natural and therefore more immersive.
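ZeroTransform did not describe how Proton Pulse does this under the hood, but the basic idea of tying game events to the music's tempo can be sketched in a few lines. The example below is a minimal sketch, assuming a track with a constant tempo in beats per minute; the function names and the `offset` parameter (time of the first beat) are hypothetical, chosen for illustration only.

```python
import math


def seconds_per_beat(bpm: float) -> float:
    """Length of one beat in seconds at a constant tempo."""
    return 60.0 / bpm


def next_beat_time(song_time: float, bpm: float, offset: float = 0.0) -> float:
    """Time (in song seconds) of the first beat at or after song_time.

    offset is the time of the track's first beat (hypothetical parameter).
    """
    spb = seconds_per_beat(bpm)
    beats_elapsed = (song_time - offset) / spb
    return offset + math.ceil(beats_elapsed) * spb


def schedule_on_beat(events, song_time, bpm, offset=0.0):
    """Quantize game events so they all fire on the next beat."""
    t = next_beat_time(song_time, bpm, offset)
    return [(t, name) for name in events]
```

For example, at 120 BPM a "pulse" requested 0.2 seconds into the song would be held until the beat at 0.5 seconds, so the visual lands exactly on the music instead of whenever the game happened to ask for it.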

In Vanguard V they needed a more cinematic feel. As Kaufman explained, it needed to feel like “late-’70s giant robot movies.” Because the game follows a fixed track through an asteroid field, they were still able to sync the music to the action.

They have taken music syncing even further in their latest VR experience, NUREN. In it, an entire city pulses and changes with the music. The remarkable thing is that every animation you see, every AI event, and every light effect is triggered by the music itself. Snares trigger giant particle blasts while the bass drives the pulse of the city. It’s an entirely dynamic experience built around music.
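One way to structure a scene where the music drives everything is an event dispatcher: the track carries timestamped instrument hits, and each instrument is mapped to visual reactions. This is a minimal sketch, not ZeroTransform's implementation; the `MusicDrivenScene` class, the `(time, instrument)` event format (which in practice might come from a MIDI track exported alongside the audio), and the reaction names are all hypothetical.

```python
from collections import defaultdict
from typing import Callable, Dict, List, Tuple

# A music event: (time in seconds, instrument name). Hypothetical format.
MusicEvent = Tuple[float, str]


class MusicDrivenScene:
    def __init__(self, events: List[MusicEvent]):
        self.events = sorted(events)  # process hits in time order
        self.cursor = 0  # index of the next event not yet dispatched
        self.handlers: Dict[str, List[Callable[[float], None]]] = defaultdict(list)

    def on(self, instrument: str, handler: Callable[[float], None]) -> None:
        """Register a visual or gameplay reaction to an instrument."""
        self.handlers[instrument].append(handler)

    def update(self, song_time: float) -> None:
        """Fire handlers for every event whose time has passed since the last update."""
        while self.cursor < len(self.events) and self.events[self.cursor][0] <= song_time:
            t, instrument = self.events[self.cursor]
            for handler in self.handlers.get(instrument, []):
                handler(t)
            self.cursor += 1


# Usage: snares trigger particle blasts, bass drives the city pulse.
log = []
scene = MusicDrivenScene([(0.0, "bass"), (0.5, "snare"), (1.0, "bass")])
scene.on("snare", lambda t: log.append(("particle_blast", t)))
scene.on("bass", lambda t: log.append(("city_pulse", t)))
scene.update(0.6)  # called once per frame with the current playback position
```

After the `update(0.6)` call, `log` holds the city pulse at 0.0 and the particle blast at 0.5; the bass hit at 1.0 waits for a later frame. Driving the clock from the audio playback position, rather than from frame time, keeps the visuals locked to what the player hears.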

When you listen to Kaufman and Moravetz, you begin to understand why they are so focused on tight audio integration in their games. As Moravetz said, music is the “most effective way to reach people emotionally.” Audio affects us on a subconscious level, making any VR experience feel more real and engaging. Moravetz continued, saying that the audio “sets up the mood, sets up what’s coming…there’s so much you can do to connect with people.” By linking audio and visuals so closely in their games, they have created a subtle way to draw people into their VR experiences.

3D Audio Tips and Tricks

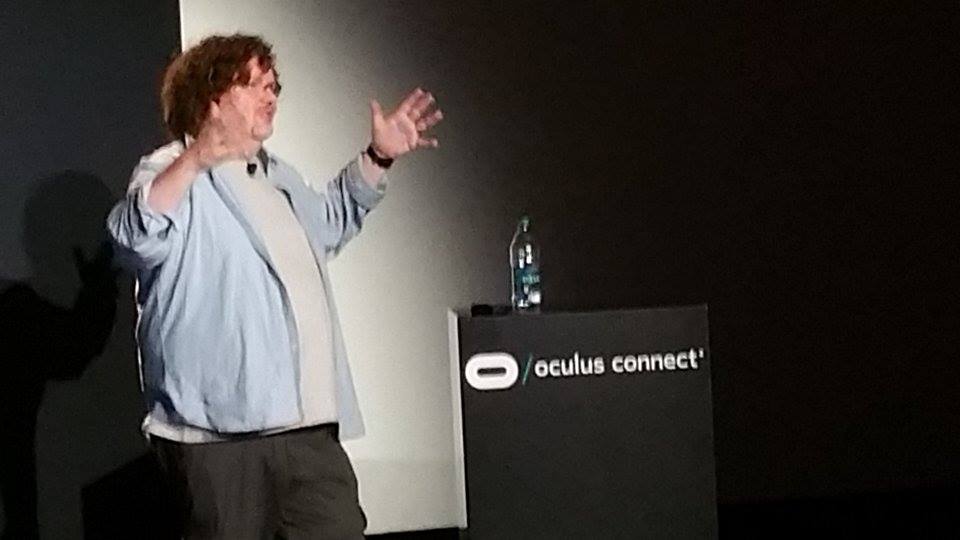

It’s one thing to draw people into a VR experience with dynamic audio. It’s a whole other problem to make them feel like they are really there. That’s what Tom Smurdon, the Audio Content Lead at Oculus, discussed in his talk “3D Audio: Designing Sounds for VR.”

The most important aspect of audio in VR, as Smurdon explained, is that “when you’re in that space, you feel like you’re in that space.” Every sound must have a source, whether it’s coming from the environment, a creature, or another person.

He then explored a number of ways 3D audio can be used and manipulated to increase immersion. Not only should sounds come from the creature or person making them, they should come from the specific part of the body that produces them, whether it’s a footfall or a scream. He even went so far as to explain that for a T-Rex roar, the high frequencies should come from the mouth while the low frequencies should come from the belly.
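Smurdon did not prescribe a specific technique for this, but one simple way to get the T-Rex effect is to split the roar into two frequency bands and feed each band to its own spatialized emitter. The sketch below assumes a one-pole low-pass filter as the crossover (a deliberate simplification; a production mix would likely use a steeper crossover), a 250 Hz cutoff, and hypothetical emitter positions; none of these values come from the talk.

```python
import math
from typing import List, Tuple


def split_bands(samples: List[float], cutoff_hz: float,
                sample_rate: float) -> Tuple[List[float], List[float]]:
    """Split a mono signal into low and high bands with a one-pole low-pass filter.

    The high band is the residual (input minus low band), so low + high
    reconstructs the input up to floating-point rounding.
    """
    alpha = 1.0 - math.exp(-2.0 * math.pi * cutoff_hz / sample_rate)
    low, high = [], []
    y = 0.0
    for x in samples:
        y += alpha * (x - y)  # low-pass state moves toward the input
        low.append(y)
        high.append(x - y)
    return low, high


# Hypothetical emitter positions in metres, relative to the T-Rex's root.
BELLY = (0.0, 1.5, 0.0)
MOUTH = (0.0, 4.0, 2.5)


def roar_emitters(samples, sample_rate=48000.0, cutoff_hz=250.0):
    """Return (position, band) pairs to hand to a spatializer."""
    low, high = split_bands(samples, cutoff_hz, sample_rate)
    return [(BELLY, low), (MOUTH, high)]
```

Because the high band is computed as the residual, the two emitters together still add up to the original roar; only its apparent source is split across the body.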

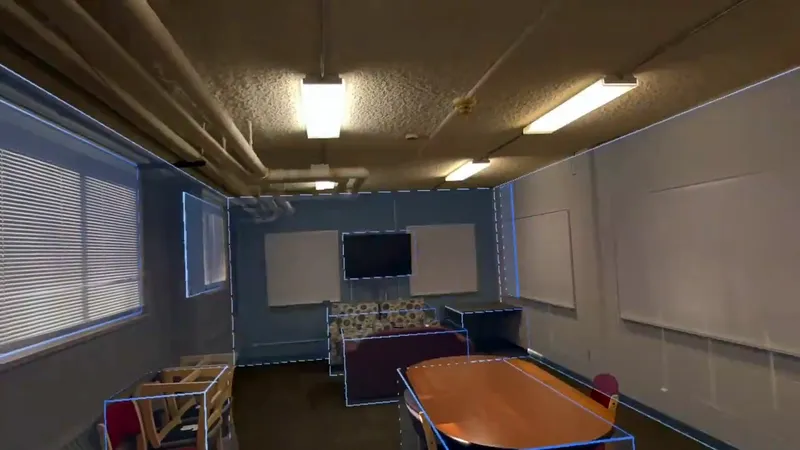

For ambient noise, he explained in depth that it should still come from points in the environment rather than being played as standard stereo audio. Emitters should be placed around the user in all directions. Height becomes incredibly important too, a big difference from traditional surround sound, which operates on a single horizontal plane. Ambient sounds placed above or below the player make the environment feel like it extends above and below them.
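As an illustration of emitters placed in all directions with height, the sketch below arranges ambient emitters on rings at several elevations around the listener. It is a minimal sketch, assuming a y-up coordinate system centred on the listener; the function name, radius, ring count, and elevations are hypothetical choices, not values from Smurdon's talk or the Oculus SDK.

```python
import math
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


def ambient_emitter_positions(radius: float, per_ring: int,
                              elevations_deg: List[float]) -> List[Vec3]:
    """Place ambient emitters on rings around the listener at several heights.

    Uses a y-up convention: elevation 0 is ear level, +90 is straight up.
    Every other ring is rotated by half a step so emitters on adjacent
    rings don't line up vertically.
    """
    positions = []
    step = 2.0 * math.pi / per_ring
    for ring_index, elev_deg in enumerate(elevations_deg):
        elev = math.radians(elev_deg)
        offset = (ring_index % 2) * step / 2.0
        for i in range(per_ring):
            az = i * step + offset
            x = radius * math.cos(elev) * math.sin(az)
            y = radius * math.sin(elev)
            z = radius * math.cos(elev) * math.cos(az)
            positions.append((x, y, z))
    return positions


# Example: undergrowth below, wind at ear level, birds overhead.
emitters = ambient_emitter_positions(radius=8.0, per_ring=6,
                                     elevations_deg=[-30.0, 0.0, 45.0])
```

Each ring can carry a different layer of the ambience, so sounds that belong above the player (birds, rain on leaves) actually arrive from above rather than from a flat stereo bed.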

A key ingredient of truly immersive experiences is having subtle sounds coming from the environment at all times. These should still be spatialized to a specific location, and these background noises help the user feel like they are in a real place. Still, Smurdon made it clear that developers should avoid overly busy audio mixes: “Focus needs to be where focus should be.”

Smurdon finished his talk with an overview of Oculus’ latest Audio SDK, version 0.11. It looks like a fantastic tool for helping developers integrate 3D audio into their experiences. It’s a free plugin for Unity, FMOD, and Wwise. It also works as a spatializer in digital audio workstations (DAWs) through the AAX and VST plugin formats, making it easy to test and develop 3D audio.

The talk was full of practical wisdom about using 3D audio. Slow builds don’t work as audio cues. Voiceovers and music should still come from an audio source, even if it’s as simple as invisible emitters over the user’s head. When visuals back up a sound, the experience becomes even more immersive. But the big thing he made clear is to test constantly. “Watch people, see what they’re looking at and paying attention to,” he explained. Why? Because, as Smurdon exclaimed near the end of his talk, “There’s no standard for any of this!”

A common refrain among VR audio experts is that audio is fifty percent of presence. Moravetz, Kaufman, and Smurdon all made it clear how much good audio can bring people into an experience and make them feel like they are really there. If developers effectively use Oculus’ 3D audio plugin and sync it with their experiences, they can create VR audio that truly resonates with people.