Another day, another fascinatingly futuristic project with Elon Musk’s name attached to it. But this time we’re not talking about SpaceX or the Hyperloop; we’re talking about teaching robots to do tasks with VR.

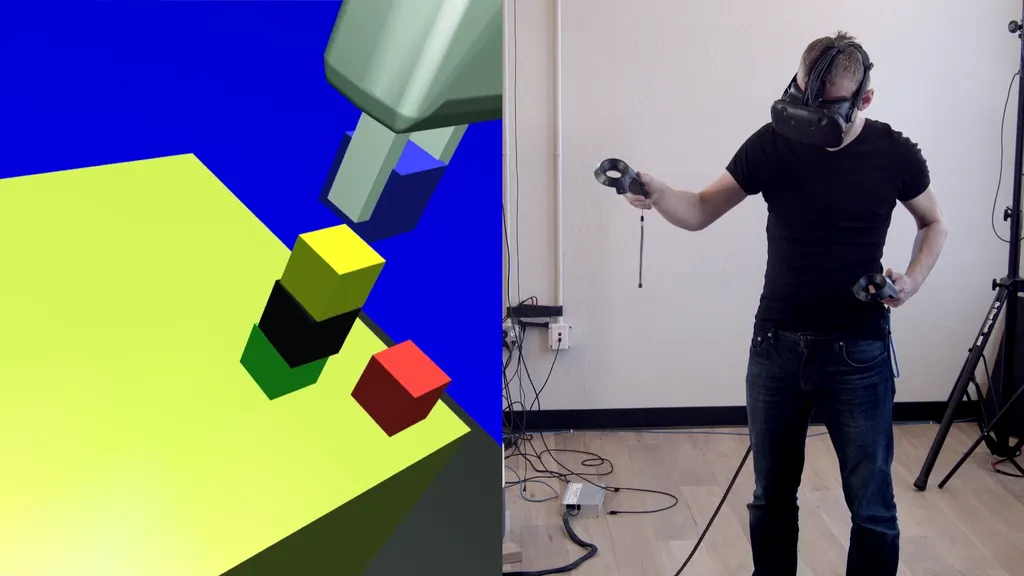

OpenAI, the artificial intelligence research company co-founded by Musk, this week revealed a look at its new system that teaches robots how to perform actions using the HTC Vive. A human puts on the Vive and, using the position-tracked controllers, acts out the task they want the robot to carry out, in this case grabbing and stacking blocks. The system records that data, and the robot learns what it’s meant to do and imitates the process in real life.

We’ve created a robotics system, trained entirely in simulation and deployed on a physical robot, which can learn a new task after seeing a human do it in VR once.

Details: https://blog.openai.com/robots-that-learn/

Posted by OpenAI on Tuesday, 16 May 2017

Check out the video above to see it in action. The robot is able to replicate a range of actions once a human has acted them out, much in the same way a parent might teach their child how to do something. That’s a little scary, when you think about it.

The robot has a camera with which it can see the environment. Two neural networks work together to move the arm in response to what the robot is seeing. The company describes the system as an early prototype that lays the foundations for much of the work it will do in the future.
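For the curious, here is a rough idea of how a two-stage setup like that could be wired together. This is a toy sketch, not OpenAI's code: the names `Step`, `perceive`, `decide` and `run_episode` are invented for illustration, the "camera image" is just a dictionary of block positions, and both stages are plain functions standing in for trained neural networks.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

Position = Tuple[float, float]


@dataclass
class Step:
    """One recorded step of a (hypothetical) VR demonstration:
    put `block` where `target` currently is."""
    block: str
    target: str


def perceive(camera_image: Dict[str, Position]) -> Dict[str, Position]:
    """Stand-in for the first network: camera image -> block positions.
    The toy 'image' already holds positions, so this just copies them."""
    return dict(camera_image)


def decide(demo: List[Step], index: int,
           state: Dict[str, Position]) -> Tuple[str, Position]:
    """Stand-in for the second network: given the demonstration and the
    current state, pick which block to move and where to move it."""
    step = demo[index]
    return step.block, state[step.target]


def run_episode(demo: List[Step],
                camera_image: Dict[str, Position]) -> List[Tuple[str, Position]]:
    """Replay a demonstration in a new scene, one action per demo step."""
    state = perceive(camera_image)
    actions = []
    for i in range(len(demo)):
        block, destination = decide(demo, i, state)
        state[block] = destination  # assume the arm completed the move
        actions.append((block, destination))
    return actions


# A human 'demonstrates' stacking A on B, then C on A.
demo = [Step("A", "B"), Step("C", "A")]
# The robot sees the same blocks in a different arrangement.
scene = {"A": (0.0, 0.0), "B": (1.0, 1.0), "C": (2.0, 2.0)}
actions = run_episode(demo, scene)
```

The point of the split is that the first stage only has to answer "where is everything?" while the second only has to answer "given the demonstration, what do I do next?", so the same demonstration can be replayed in a scene where the blocks start somewhere else.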

It’s not hard to think of simple tasks these robots could carry out even with this basic implementation. The real question is what more complex actions OpenAI will be able to unlock in the future with the help of VR. Block stacking is just the start.