A U.S. company called Eyefluence announced yesterday at a private event that it is actively working to bring eye tracking technology to the “next generation of VR headsets.”

–

Last night I was invited to a press-only evening showcase for a technology that I have come to believe will be truly instrumental in both virtual and augmented reality’s eventual rise to dominance. I’m not talking about a haptic feedback suit that turns your arms into video game controllers. I’m not talking about a treadmill that is attempting to solve the VR locomotion problem. I’m talking about a system that turns the smallest movements of your eyes into an interface with the potential to rival the iPhone’s touch screen. I’m talking about Eyefluence.

“In 1998 a doctor by the name of William Torch met a patient who was ‘locked in,’” Jim Marggraff says to the small group of journalists gathered in the dimly lit restaurant. “So he went to Radio Shack, bought some components and built that patient a system by which to communicate with the outside world.”

Being “locked in” in this case means that the patient was completely robbed of all mobility from the top of his head to the tips of his toes. The only parts of his body he could still control were his eyes. And so, Dr. Torch built an eye tracking system capable of seeing these infinitesimal motions and using them to control a cursor and spell out words.

Marggraff met Dr. Torch in 2012 after turning a passion for children’s education into a hot-selling electronic teaching tool called the LeapPad. He was floored by the technology, but as impressed as he was, he also felt a glimmer in the back of his mind telling him one thing: we could probably make this even better.

In 2013 Marggraff connected with a celebrated medical device wizard named David Stiehr. Stiehr is a serial entrepreneur who founded a company that inserted small coils into the lungs and another that shipped orthopedic devices by the truckload to athletes around the world.

Together, the two founded Eyefluence in 2013. The goal of the company, Marggraff said, was to do one thing: create an eye tracking system that measures the intent of your mind, not just the motions of your eyes, in order to control technology.

What this means in practice is that Marggraff, Stiehr, and their team were determined to eschew the two most commonly used control mechanics in the eye tracking world: blinking and dwelling. Blink controls enable you to make selections by gazing at a certain digital icon or reticle and, of course, blinking. Dwelling uses a similar system but requires you to hold your gaze, or dwell, on those icons in order to make a selection.

Marggraff and Stiehr felt these input methods were exceedingly slow and unnatural. They believed that they could dramatically speed up the rate at which disabled people could communicate, and they also felt that they could build a system that allows a user to “do everything they can with a finger on a smartphone, but with the smallest motions of their eyes.”

I got to go hands-on with the system that Eyefluence calls “iUi,” and I have to say it works exceedingly well. The exact details of the user interface are not being released by the company, but here is what I can tell you: I was able to navigate complicated menus, keyboards, and galleries at incredible speeds using only my eyes and without ever having to blink or dwell on anything.

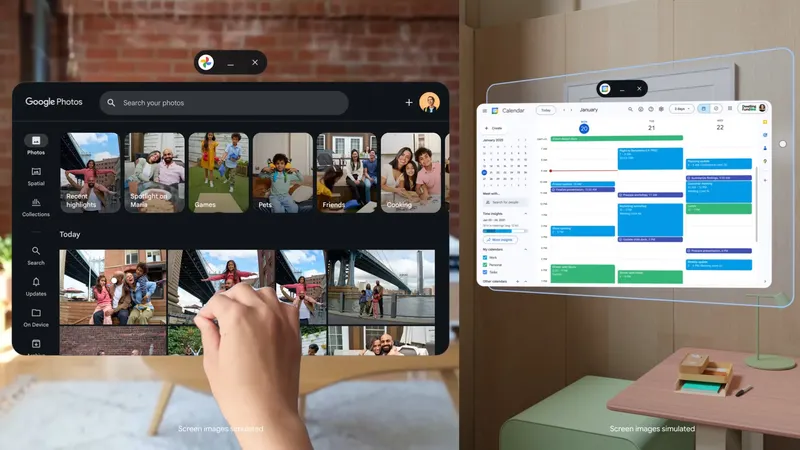

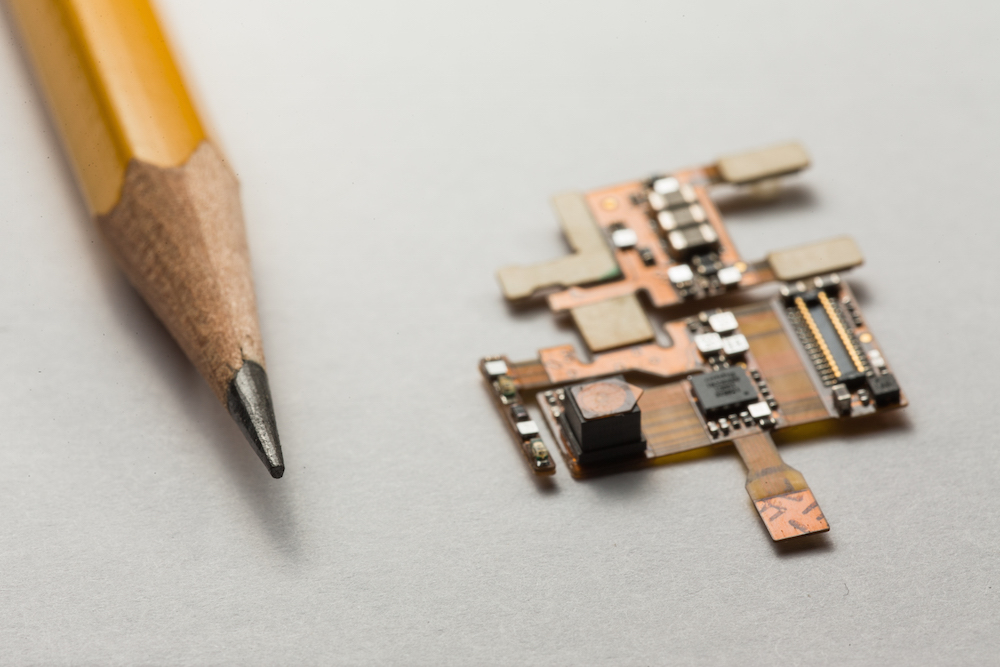

Suffice it to say, Eyefluence has indeed come up with a system that could become the new standard for operation in things like virtual reality and augmented reality headsets. The demos I saw were running on a modified HTC Vive and a pair of ODG AR Glasses. Both headsets were retrofitted with tiny infrared LED lights that are used to create a digital image of your eye. These lights communicate with Eyefluence’s custom hardware and software to create the system I saw in action.

The retrofitted system worked very well, but Marggraff and Stiehr made it abundantly clear throughout the night that aftermarket solutions are not a part of their business plan going forward. Marggraff revealed during his opening remarks that Eyefluence is “actively working with notable VR and AR partners in order to integrate our technology directly into their hardware.”

When asked if he could mention specifically who these partners were, Stiehr stated, “They are names you would instantly recognize from the VR world.” Stiehr also confirmed that Eyefluence met directly with Palmer Luckey at Oculus and top engineers at Valve.

I also asked whether these are deals that Eyefluence is hoping to get, or whether the duo are referring to actual inked contracts leading to confirmed eye tracking in next-generation VR headsets. Marggraff said to “watch the space” and keep an eye out for an official announcement “later this year.”

Eye tracking in VR headsets looks like it is heading from niche idea to apparent necessity as the heavy hitters at Oculus and HTC continue to work on the mysterious second generation of consumer headsets. In past interviews, Eyefluence competitors like SMI, which recently showed a new foveated rendering technique, have echoed this idea of inevitable adoption of eye tracking technology in VR headsets. Stiehr mentioned that Eyefluence’s technology can also be used for foveated rendering to “reduce computing loads by as much as 60 percent.”

These are some of the strongest indications we’ve yet seen that a company is actively working to bring eye tracking into VR. If Eyefluence is indeed bringing its iUi system to major hardware like the second iterations of the Oculus Rift or HTC Vive, then all I can say is that it would be a very welcome addition to a world currently dominated by headset touchpads and Xbox controllers.

–

Eyefluence has raised over $7 million in venture funding from major players like Motorola and Dolby. The company also recently brought in a Series B funding round for an undisclosed amount. It currently has 31 employees, including its two co-founders. For more news on this group, keep checking UploadVR.