When we last heard from OTOY, the company revealed it was bringing light field baking and more to its Unity integration. At GDC today, OTOY is announcing a heck of a lot more.

Company CEO Jules Urbach is here to run through a list of updates in the video below, but you best prepare yourself: there’s a lot. On display at Unity’s booth is the first light field video created in OTOY’s Octane renderer using Unity. According to the company, this is both a “video light field movie” and a “huge game level size navigable volume”. That essentially means you can move through the photorealistic space.

The piece uses the stereo cube map render shown at the most recent Unite conference, and it apparently streams without any need for buffering.

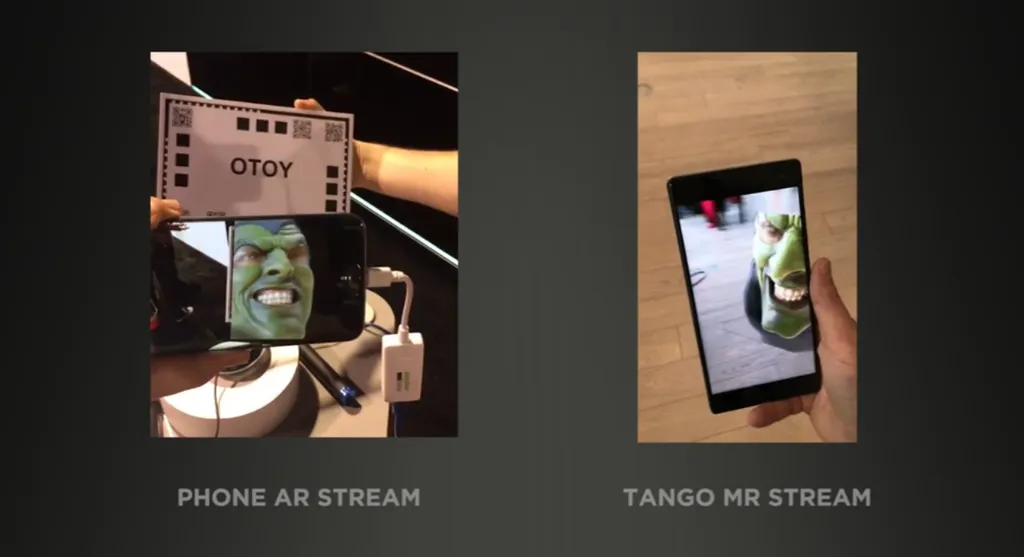

Perhaps even more exciting is a demonstration of the company’s ORBX Media Player (OMP) running on Google’s Tango 3D capture system. This brings OTOY’s light field streaming into mixed reality, letting you view realistic-looking objects placed in the real world through a phone’s screen.

OTOY is also working with ODG to incorporate the tech into ODG’s AR smartglasses once they’re capable of six degrees of freedom (6DOF) movement. With OTOY’s help, the stereo polarized cameras being built into ODG’s glasses will also support full light stage capture, using OMP to process frames. Captured items could then be turned into Unity assets and brought into VR or MR experiences.

Finally, moving back to VR, Unity and OTOY are showcasing a preview of Samsung’s VR Internet Browser app for Gear VR with native ORBX file and URL playback support. This means you’ll be able to immerse yourself in realistic environments as you browse the web. New samples of this content have also been posted online, showcasing “experimental support for exporting and embedding interactive Unity apps inside of an ORBX media file”, which brings navigable UX, screens and dynamic objects into those files. The company says this already works on mobile.