Google today revealed it is expanding the preview for its Lens service, bringing the AI-driven image recognition system to all Google Photos English-language users on both Android and iOS in the coming weeks. The service is also coming to Google Assistant, and engineers at the company are continually training the system to recognize more things.

According to a blog post from Google:

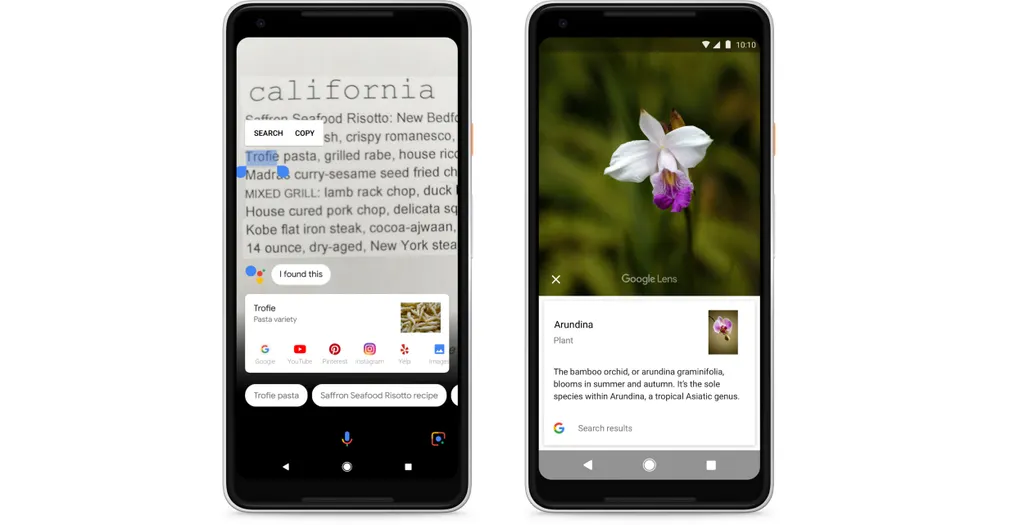

We’ve added text selection features, the ability to create contacts and events from a photo in one tap, and—in the coming weeks—improved support for recognizing common animals and plants, like different dog breeds and flowers.

Google also moved its ARCore system out of preview with the release of version 1.0. This should open the floodgates for developers to bring higher-quality AR apps to a number of high-end Android phones, including Google’s Pixel, Pixel XL, Pixel 2 and Pixel 2 XL; Samsung’s Galaxy S8, S8+, Note8, S7 and S7 edge; LGE’s V30 and V30+ (Android O only); ASUS’s Zenfone AR; and OnePlus’s OnePlus 5.

With Lens, it is interesting to watch Google develop what is essentially a killer AR app several years after the introduction of its Google Glass headset. It is easy to imagine Google Lens in an AR headset becoming a must-have feature akin to Google Maps, offering its wearer all sorts of useful information about the world around them. Google still sells Glass as an enterprise-focused device, and Google Assistant powered by Lens could supercharge it. We’ve asked Google whether it plans to do that. Even if it doesn’t, the company is known for providing foundational services that work across all devices (Search, Gmail, YouTube, Maps), and putting Lens in AR headsets from other companies makes a lot of sense as a long-term ambition for Google.

For the time being, though, Lens is sure to get plenty of use from Google Photos and Google Assistant users.