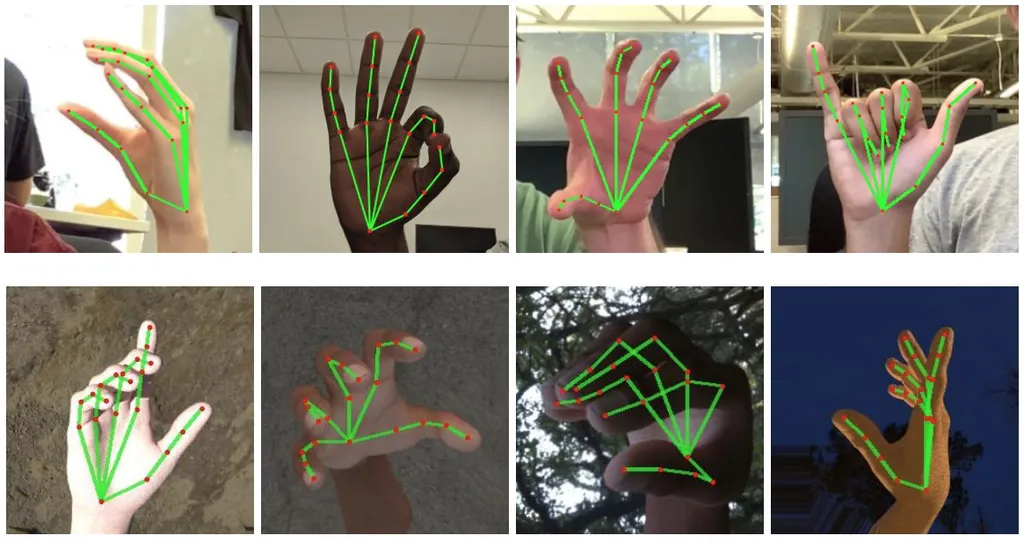

Google has released an open-source algorithm that performs real-time hand tracking on mobile hardware, estimating the positions of 21 points on the hand and fingers.

The system is part of Google’s MediaPipe, a modular framework for machine-learning-based solutions such as face detection, object detection, and hair segmentation.

When people put on a VR headset, one of the first things they do is reach out with their hands. Tracked controllers offer a basic representation of our hands, and they’re well suited to gaming. But they don’t track most finger motion, and the very act of holding them restricts that motion too.

In non-interactive VR experiences and social VR, controllers often feel more like a chore than a help. If we could enter these experiences simply by putting on a headset and seeing our real hands, the reduced friction would be a welcome improvement.

Unfortunately, Google’s blog post doesn’t mention the quality or latency of the current implementation. It also doesn’t mention VR, though Google is known to be researching virtual reality technologies. It does, however, say that the company plans to “extend this technology with more robust and stable tracking” in the future.

Google doesn’t currently have plans to release an Oculus Quest competitor. In fact, the company’s commitment to VR at all was called into question this year when VR went unmentioned at Google I/O 2019. But if this changes, such technology could allow for a standalone headset with natural input interactions.

Facebook, the company behind the Oculus brand, is also known to be researching camera-based finger tracking, but it has not released any implementation to the public.