Lemnis Technologies demonstrated a series of prototype optical designs at CES 2019. Though none were in a consumer-ready state, each showed promising previews of features which might make it into future VR headsets.

We tried three Lemnis headsets at the show. The first, the “Verifocal VR Kit,” used moving “Alvarez” lenses, which were audible inside the headset’s housing as they adjusted to eye movements. Combined with eye tracking and software, this headset is meant to alleviate the vergence-accommodation conflict, allowing for clearer visuals of close objects as well as more comfortable long-term use of a VR headset. It is said to have a focus range of 4.5 diopters, so it “can adjust focus from 22 cm to optical infinity.”

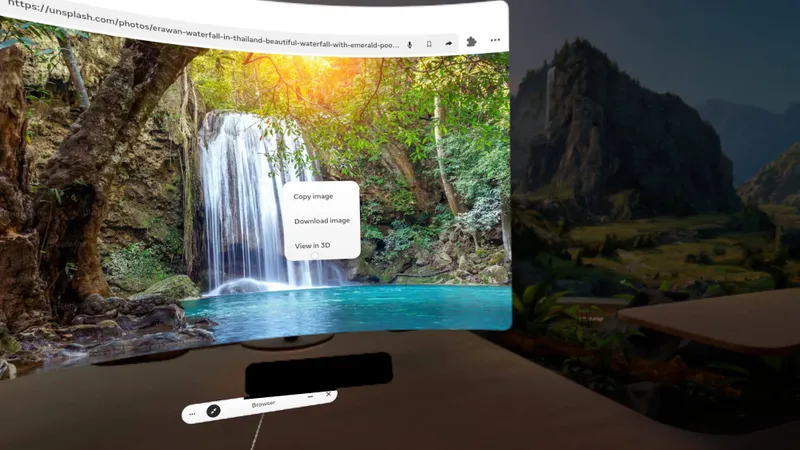

A second “R&D prototype” headset with a much smaller field of view showed silent operation by way of “liquid lenses.” We have photographs of both these systems, with the liquid lens headset below. The “small FOV is not a limitation of the platform, it is only because these specific lenses are publicly available off-the-shelves,” Pierre-Yves Laffont, co-founder and CEO of Lemnis, explained in an email.

A third headset — still in development — was shown once we agreed not to take pictures. This final headset is “based on actuated screens” and, again with eye tracking, demonstrated an approach meant to solve the vergence-accommodation conflict while featuring a focus range of “8 diopters”, or from “12.5 centimeters to infinity.”

“Usually you don’t need to focus that close, so this range is mostly useful for myopia correction,” Laffont wrote.
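The quoted near-focus distances follow directly from the diopter figures, since a diopter is the reciprocal of a distance in meters. Here is a minimal Python sketch of that arithmetic, assuming each quoted range starts at optical infinity (0 diopters); the function name and labels are illustrative and not taken from Lemnis’ software.

```python
import math


def nearest_focus_cm(range_diopters: float) -> float:
    """Nearest focus distance in centimeters for a focus range that
    starts at optical infinity (0 D). Assumes distance_m = 1 / diopters."""
    if range_diopters <= 0:
        # A zero-diopter range never leaves optical infinity.
        return math.inf
    return 100.0 / range_diopters


# Focus ranges quoted in the article (labels are illustrative).
for label, diopters in [
    ("Verifocal VR Kit (Alvarez lenses)", 4.5),
    ("Actuated-screen prototype", 8.0),
]:
    print(f"{label}: {diopters} D -> {nearest_focus_cm(diopters):.1f} cm")
```

Run as written, this gives roughly 22.2 cm for 4.5 diopters and 12.5 cm for 8 diopters, in line with the figures Lemnis quoted.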

Tuning Headsets For Human Vision

This third design demonstrated pretty compelling AR pass-through. The sense of depth in the world around me appeared spot on as I moved my hand through a floating solar system that was inserted into my view of the real world. I also reached out and touched real-world objects, which were right where the headset showed them to be.

The overall design could be a hint at the sorts of benefits to come in future headsets built for more comfortable long-term use. Last year, Facebook Reality Labs revealed the Half Dome varifocal prototype based around a similar premise of eye tracking and moving panels.

Lemnis is seeking partners to license their “software platform and hardware designs.”

For those unfamiliar, in current consumer headset designs the lenses of the headset itself focus your eyes comfortably at a distance and represent all digital content at that “fixed focus” distance. In the real world, when you focus on objects up close, your eyes turn slightly toward one another and the lens in each eye changes shape to adjust focus. The vergence-accommodation conflict arises because, with a fixed focus design, your eyes still turn toward content represented at various depths but cannot adjust focus to match, as they would in the real world.

Precision eye tracking could be useful in addressing this limitation. One potential way this could help is by moving the displays or other optical elements on the headset to follow eye movement and adjust focal depth. This would provide the clearest visuals directly in front of the eyeballs. Faces come in so many shapes and sizes that eye tracking could also be critical in tuning a headset’s fit and focus for every user. This includes accounting for the prescriptions of wearers who need glasses. For UploadVR editor Kyle Riesenbeck, who wears corrective lenses that are different for each eye, it was a big deal to see through a headset which could adjust to account for that prescription without his glasses.

A touch of a button on the computer powering the headset turned the view from AR to VR. Lemnis researchers built their own eye tracking solution. In one demo I placed the floating solar system in between myself and Kyle and followed the planets making their revolution around the sun while listening to the optics of the headset straining to keep up with the tracking movement of my eyes. Again, this was “a very early prototype that is still under development.”

When it worked, though, the planets even just a few inches from my eyes appeared to be extremely sharp. With the AR pass-through mode I held Lemnis’ marketing brochure up close and watched as the focus changed to show the text more clearly. Other times focus would pop in or improve only after my eyeball was already pointed in a new direction. This is being addressed, Laffont wrote, and may have been due to the headset slipping out of place on my face and causing the eye tracking to become inaccurate. In a separate demo earlier, Lemnis’ eye tracking was used to successfully select virtual objects and move them.

There’s a lot of work to be done with these concepts to get them ready for consumers, but we look forward to seeing future demos from Lemnis, and we hope Facebook shows Half Dome this year so we can do a comparison.