“Just look at that box there and pull the trigger,” says Christian Villwock, SensoMotoric Instruments’ director of OEM technology. I do, and watch an explosion send the box flying high into the sky. I track it with my head and eyes as it becomes smaller and smaller, climbing ever higher. Feeling like my gaze is locked on it, I pull the trigger again, sending it so high that the speck disappears from view.

“Damn, that was a nice shot.”

SensoMotoric Instruments (SMI) committed itself to solving one of the most vital issues for the second generation of VR: eye tracking that is accurate, fast, and cheap. It has accomplished that goal with flying colors. SMI didn’t get here alone; its new 250Hz eye tracking system is the result of a partnership with camera sensor manufacturer Omnivision, which provided the camera hardware for the new system.

https://www.youtube.com/watch?v=Qq09BTmjzRs

SMI’s eye tracking works by combining a ring of infrared lights around the edge of each lens with two Omnivision 250Hz camera sensors inside the headset. The IR light is invisible to the human eye but is picked up by the cameras, and SMI’s proprietary eye tracking SDK uses those images to tell the computer exactly where the user is looking. The hardware and software process all of this in under 2ms, allowing for fluid and, most importantly, accurate eye tracking.

“Getting over the 240Hz mark was important,” says Villwock. “It allows us to track the saccadic motion of the eye.” Saccades are the rapid, largely unnoticed movements your eyes make as they jump from one point of fixation to the next.
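Some quick arithmetic puts these timing figures in perspective. The sketch below is a rough model, not SMI’s pipeline: it converts a camera rate into a frame interval, adds the “under 2ms” processing time quoted above (treated here as exactly 2ms), and counts how many frames are guaranteed to land inside a saccade. The 30ms saccade duration and all helper names are hypothetical illustration values, not figures from SMI.

```python
# Rough timing model for a camera-based eye tracker.
# Assumptions (hypothetical, for illustration only): processing takes the
# "under 2ms" quoted in the article, treated as exactly 2ms, and a saccade
# is modeled as lasting 30ms. Exposure and display latency are ignored.

PROCESSING_MS = 2.0  # upper bound on processing time quoted by SMI
SACCADE_MS = 30      # hypothetical saccade duration


def sample_interval_ms(rate_hz: int) -> float:
    """Time between consecutive camera frames."""
    return 1000.0 / rate_hz


def worst_case_gaze_age_ms(rate_hz: int, processing_ms: float = PROCESSING_MS) -> float:
    """An eye movement that happens just after a frame is captured waits a
    full interval for the next frame, then the processing time on top."""
    return sample_interval_ms(rate_hz) + processing_ms


def samples_during(duration_ms: int, rate_hz: int) -> int:
    """Minimum number of camera frames guaranteed to land inside an event
    of this length (integer math avoids floating-point rounding)."""
    return duration_ms * rate_hz // 1000


if __name__ == "__main__":
    for rate in (60, 120, 250):
        print(
            f"{rate:>3} Hz: frame every {sample_interval_ms(rate):.2f} ms, "
            f"worst-case gaze age {worst_case_gaze_age_ms(rate):.2f} ms, "
            f"{samples_during(SACCADE_MS, rate)} samples in a {SACCADE_MS} ms saccade"
        )
```

Under these assumptions, a 60Hz camera might catch a single frame of a short saccade, while a 250Hz camera gets several, which is one way to read why crossing the 240Hz mark matters.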

Palmer Luckey suggested on Reddit that SMI may have been using auto-aim in its box demo. I made sure to ask the team whether this was the case, and they responded with a sharp “no.”

While shooting boxes and having avatars respond to your glance are fun demonstrations of the technology, the star of the show was a demonstration of foveated rendering.

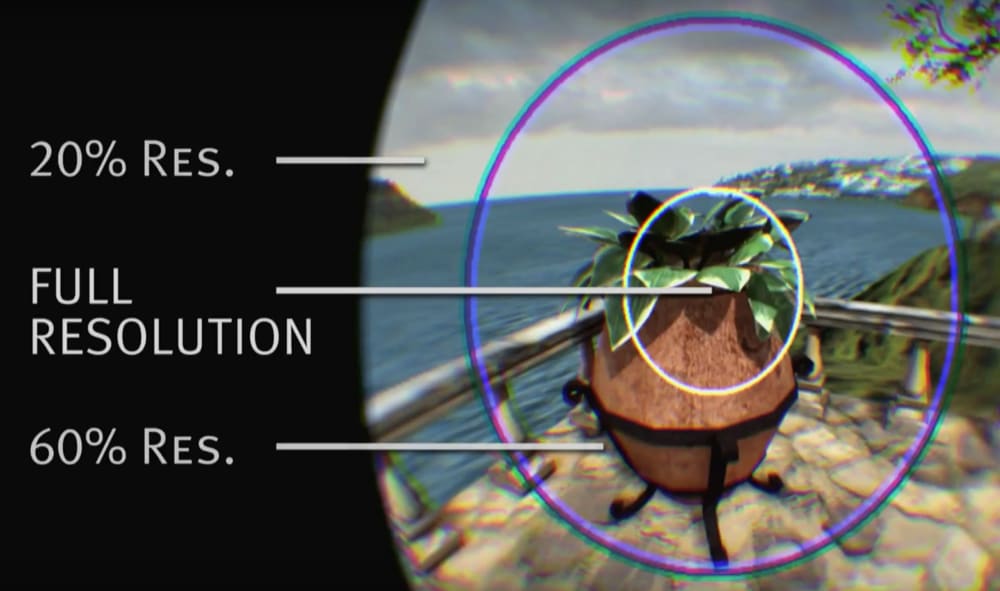

Foveated rendering is a technique that takes advantage of the way human vision actually works, and when done properly, it can unlock a whole new level of resolution using today’s graphics processing technology.

When you look at an object, only part of your view appears fully sharp: roughly 10-20% of your vision, directly at the center, with detail falling off progressively toward your peripheral vision. Eye tracking combined with foveated rendering lets the system render only the region at the center of your gaze at full resolution. The trick is that it has to be fast enough that the user doesn’t notice; otherwise, blurry patches will cloud the view as the high-resolution region catches up to where you are actually looking.
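The idea of rendering the gaze center at full resolution and the periphery at less can be sketched as a function from a pixel’s angular distance to the gaze point (its eccentricity) to a resolution scale. This is a hypothetical linear falloff, not SMI’s method or any GPU vendor’s; the 5-degree full-resolution radius, the 30-degree end of the falloff, the 0.25 minimum scale, and the display geometry are all illustrative assumptions.

```python
import math

# Hypothetical foveation parameters (illustrative, not from SMI):
FOVEA_DEG = 5.0         # full resolution inside this eccentricity
FALLOFF_END_DEG = 30.0  # beyond this, resolution stays at the minimum
MIN_SCALE = 0.25        # lowest per-axis resolution scale in the periphery


def eccentricity_deg(pixel, gaze, deg_per_px):
    """Angular distance between a pixel and the tracked gaze point, using a
    simplifying constant degrees-per-pixel approximation."""
    dx = pixel[0] - gaze[0]
    dy = pixel[1] - gaze[1]
    return math.hypot(dx, dy) * deg_per_px


def resolution_scale(ecc_deg):
    """Per-axis resolution scale: 1.0 in the foveal region, falling linearly
    to MIN_SCALE at FALLOFF_END_DEG and clamped beyond it."""
    if ecc_deg <= FOVEA_DEG:
        return 1.0
    if ecc_deg >= FALLOFF_END_DEG:
        return MIN_SCALE
    t = (ecc_deg - FOVEA_DEG) / (FALLOFF_END_DEG - FOVEA_DEG)
    return 1.0 - t * (1.0 - MIN_SCALE)


if __name__ == "__main__":
    gaze = (960, 540)  # gaze point reported by the eye tracker, in pixels
    deg_per_px = 0.05  # hypothetical display: about 96 degrees across 1920 px
    for px in [(960, 540), (1100, 540), (1400, 700), (1900, 1000)]:
        ecc = eccentricity_deg(px, gaze, deg_per_px)
        print(px, f"{ecc:.1f} deg -> scale {resolution_scale(ecc):.2f}")
```

The catch described above lives in `gaze`: if it is refreshed too slowly, the full-resolution region lags behind the eye and the user sees the blurry catch-up.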

That wasn’t the case with SMI’s demo.

“The problem with this demo is that if you can’t see it, that means it probably is working,” says Villwock, and he is right: the effect mimics your vision so accurately that it blends seamlessly with the scene. In the headset, I couldn’t tell when the effect was on and when it was off, which is exactly the point. Outside the headset, watching someone else go through the demo, you can clearly see the differences in resolution.

With properly working foveated rendering, SMI can achieve a graphical performance boost of “a factor of two to four” right now, with the possibility of “even higher factors of improvement.” These are the kinds of steps needed to match the resolution of the real world, which Oculus’ chief scientist, Michael Abrash, says will take 8K per eye, something out of reach with current consumer technology.
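Where a “factor of two to four” could come from is easy to see with a simplified pixel-count model. Suppose a fraction of the screen around the gaze point is shaded at full resolution and the rest at a reduced per-axis scale, so the periphery costs the square of that scale per unit area. The fractions and scales below are hypothetical, and the model ignores fixed per-frame costs and the overhead of foveation itself, so real-world gains will differ.

```python
def foveated_speedup(fovea_fraction: float, periphery_scale: float) -> float:
    """Ideal shading speedup versus rendering every pixel at full resolution.

    fovea_fraction: share of screen area rendered at full resolution (0..1).
    periphery_scale: per-axis resolution scale for the rest of the screen;
    pixel count, and so shading cost, scales with its square.
    Assumes cost is proportional to shaded pixels (a simplification).
    """
    if not 0.0 <= fovea_fraction <= 1.0:
        raise ValueError("fovea_fraction must be between 0 and 1")
    if not 0.0 < periphery_scale <= 1.0:
        raise ValueError("periphery_scale must be in (0, 1]")
    relative_cost = fovea_fraction + (1.0 - fovea_fraction) * periphery_scale ** 2
    return 1.0 / relative_cost


if __name__ == "__main__":
    # Hypothetical configurations, not SMI measurements.
    for fraction, scale in [(0.2, 0.5), (0.1, 0.5), (0.1, 0.25)]:
        print(f"fovea {fraction:.0%}, periphery scale {scale}: "
              f"{foveated_speedup(fraction, scale):.1f}x")
```

In this toy model, shrinking either the full-resolution region or the peripheral scale raises the ideal speedup, which is one reason accurate, low-latency tracking plausibly matters: the tighter the tracker, the smaller the full-resolution region can safely be.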

SMI is not a foveated rendering company, however; this demo was mainly meant to show that foveated rendering is possible with its latest hardware and SDK. Ultimately, implementing foveated rendering will be left to graphics card makers like Nvidia and AMD, ideally, SMI hopes, using SMI’s hardware and software.

The question then turns to why, if eye tracking technology appears to be ready and is such a vital piece, it is being left out of the first generation of hardware. The main reason may come down to one of the biggest unknowns: the size of the current market.

Right now, integrating SMI’s technology into current VR hardware requires a bit of handcrafting; it has to be hacked into the headsets. Then there are economies of scale. At small scale, these parts, the software that drives them, and the labor to integrate them by hand are too expensive for a consumer upgrade, driving the price to $12,000 or more. That takes it wildly out of the range of the average consumer, but what happens when production reaches 1 million units?

“The total cost of all the hardware at scale is in the single digits,” says Villwock, “a few dollars.”

That means adding eye tracking to second-generation headsets would cost a nominal amount relative to the benefits it brings. With the initial size of the market unknown, and the price of the Oculus Rift already causing sticker shock, eye tracking has been relegated to the second generation of VR technology. That is fine, because it gives the technology even more time to mature and become as polished as possible before it reaches consumer devices, something Villwock is certain will happen:

“My personal belief,” he says, “is that all version two headsets will have eye tracking integrated.”

With this successful demonstration, all eyes are on SMI to make that prediction a reality.