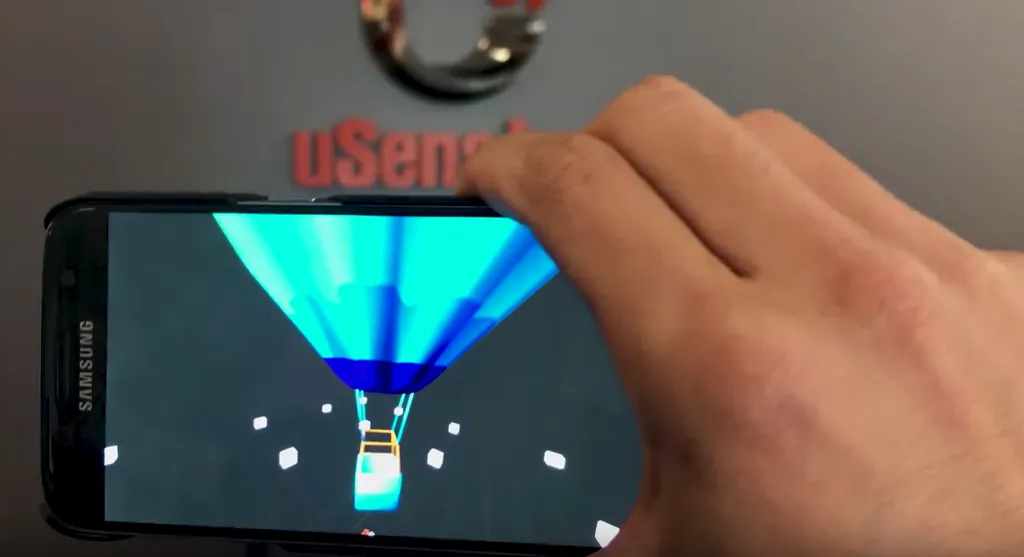

It’s been nearly a year since we last heard from uSens. Back then, we wrote about the company’s new mobile SDK, which offered positional tracking for smartphone-based headsets as well as hand-tracked input. Today, the company is announcing a new technology that offers better inside-out mobile positional tracking using simultaneous localization and mapping (SLAM).

Using a camera fitted to a smartphone, uSens’ new SLAM algorithm is able to create a virtual map of the environment around the user and then continue to accurately reflect the phone’s real-world position within that environment. Move forward, and your phone will continue to scan the environment, registering the change and shifting the virtual image to match. The result is true six degrees of freedom (6DOF) tracking, covering both position and rotation. You can see it in action in the video below.
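To make the 6DOF idea concrete, here is a minimal Python sketch of what a tracked pose represents and how it changes where a virtual object appears. It is not uSens’ algorithm or SDK: the `Pose6DOF` class, the `world_to_device` helper, the axis convention (y up, -z forward) and the rotation order are all assumptions chosen for illustration.

```python
import math
from dataclasses import dataclass


@dataclass
class Pose6DOF:
    """Hypothetical 6DOF pose: three translations plus three rotations."""
    x: float = 0.0      # metres, right
    y: float = 0.0      # metres, up
    z: float = 0.0      # metres, backward (so -z is "forward")
    yaw: float = 0.0    # radians, rotation about the y axis
    pitch: float = 0.0  # radians, rotation about the x axis
    roll: float = 0.0   # radians, rotation about the z axis


def _matmul(a, b):
    """Multiply two 3x3 matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]


def rotation_matrix(pose):
    """Device-to-world rotation, assumed order R = Ry(yaw) Rx(pitch) Rz(roll)."""
    cy, sy = math.cos(pose.yaw), math.sin(pose.yaw)
    cp, sp = math.cos(pose.pitch), math.sin(pose.pitch)
    cr, sr = math.cos(pose.roll), math.sin(pose.roll)
    ry = [[cy, 0.0, sy], [0.0, 1.0, 0.0], [-sy, 0.0, cy]]
    rx = [[1.0, 0.0, 0.0], [0.0, cp, -sp], [0.0, sp, cp]]
    rz = [[cr, -sr, 0.0], [sr, cr, 0.0], [0.0, 0.0, 1.0]]
    return _matmul(_matmul(ry, rx), rz)


def world_to_device(pose, point):
    """Express a fixed world-space point in the device's frame: R^T (p - t)."""
    r = rotation_matrix(pose)
    d = (point[0] - pose.x, point[1] - pose.y, point[2] - pose.z)
    # Multiplying by the transpose of R inverts the rotation.
    return tuple(sum(r[j][i] * d[j] for j in range(3)) for i in range(3))


# A virtual object anchored 2 m in front of the starting position.
anchor = (0.0, 0.0, -2.0)
print(world_to_device(Pose6DOF(), anchor))        # seen from the start pose
print(world_to_device(Pose6DOF(z=-0.5), anchor))  # after stepping 0.5 m forward
```

In this sketch, stepping half a metre forward leaves the anchored object roughly 1.5 m ahead instead of 2 m, which is the kind of update a SLAM tracker has to supply every frame. A rotation-only (3DOF) tracker would leave the object at a fixed distance no matter how far the user walked.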

Last year, uSens raised $20 million to work on this technology and announced its Fingo series of sensors, which provide 6DOF tracking and 26DOF hand tracking. At the time, though, the company was only able to show its tracking on PC-based headsets. Now, the company is using only smartphone cameras, freeing its algorithm from dedicated sensors and providing increased tracking speed and lower power consumption.

“With SLAM, detecting and tracking surfaces is also fundamental,” Yue Fei, uSens co-founder and CTO, told me over email. “So, if you are tracking an object on a table, you are not just tracking yourself or a body part, but everything in the camera’s field of view. Only then can you get a reference point for your position in the virtual/augmented world.”

We’ve seen other solutions of late that promise positional tracking with just your smartphone. Apple’s ARKit might not be intended for VR, but people are already using it to experiment in this area, while Google Tango uses dedicated hardware to similar effect. So how does uSens plan to compete with these growing first-party solutions?

“The secret sauce is always in the algorithm and we have received great feedback on our SLAM performance and every day, our algorithm is improving,” Fei said, noting that Tango takes a very different approach and that ARKit has separate goals. “A big differentiator for us is that our solution supports both SLAM and 3D hand tracking at the same time. We are announcing that we offer SLAM solutions combined with our hand tracking to add another layer to immersive ARVR solutions.”

Fei also told me that last year’s Fingo line had seen a “steady quarter-on-quarter increase” in sales and usage, though he declined to offer official figures. He did reveal, however, that the company has a “major upgrade” to both the hardware and software coming later this year. The new SLAM SDK, meanwhile, will roll out within the next two to three months. The tech will be on display at SIGGRAPH in early August.