The best virtual reality news at NAB was tucked away in Immersive Media’s booth. That’s where Tim Dashwood was showing a preview of StereoVR Toolbox, a series of plugins for working with virtual reality in Final Cut Pro, Motion, Premiere, and After Effects (Mac only, by the way). The plugins are pretty awesome, and in retrospect they seem sort of obvious and necessary.

Basically, the plugins let you use an Oculus headset as a director’s monitor, updating live as you cut or composite your live action VR footage (or pre-rendered CG footage). Before StereoVR Toolbox, your only option was to cut blindly, cross your fingers, render, load into the headset, and hope you got it right. It’s the virtual reality equivalent of splicing negative with tape, minus the nostalgia or retro hipsterness (depending on your age). It’s an inhumane way to work. But StereoVR Toolbox makes that whole process realtime, sending preview frames out directly to the headset, complete with orientation tracking and mapping. It’s almost magical, and it makes me wonder how anyone cut VR before this without going insane.

Dashwood was demoing his plugins with the Oculus DK1, but they also support the DK2, and he intends to support other HMDs in the future. “My goal is not to only do this for Oculus Rift, I’m just calling them HMDs because who knows? I want to get to [the] point where I create an iOS app, someone has a Google Cardboard, and I’m just sending a signal through Bluetooth to an iPhone. And that’s pretty easy to do with the way OS X is set up.”

I’m glad it’s easy for you, Tim.

And if you think cutting without this plugin is trivial — ‘I can figure out what’s going on in that crazy, funhouse mirror footage,’ you say — well, you’re either very special or very wrong. I spoke with an editor at NAB who was hired by a high-end VR production shop solely for his experience with IMAX dome footage. The company figured his experience cutting distorted footage would allow him to pre-viz the footage on the fly. That’s pretty special. (The plugins are easier.)

“You can hit play, I can have a director with the head mounted display on, looking around and seeing what’s going on in the scene,” explained Dashwood. The plugin “allows us to make creative and aesthetic decisions … but also just stuff like dissolves and transitions, color correction … all the things you could do in 2D, you can do in VR now.” (Of course, ‘now’ is relative — you just gotta get Tim to let you into the beta program. Mere mortals will have to wait a few weeks or months.)

So now you can cut and see the effects of your cut instantly. Note that Dashwood calls the Oculus the “director’s monitor,” which suggests you shouldn’t wear the headset while actually cutting (unless you’re a quick-key ninja). Personally, I don’t usually have a director looking over my shoulder. I expect to make an edit decision, throw a headset on, and check out the result myself. It’s not quite as seamless as the director-editor pairing, but I think it will help build intuition for editing VR, and it’s a lot better and faster than the cut-render-load-watch carousel. (Maybe I’ll let the director sit in for the fine cut.)

But there’s more than just preview; the Toolbox has some VR-specific functions built in. The big one is mapping: if you’ve got a single file with your VR footage in it, the StereoVR Toolbox can map it to the correct projection in one GPU-accelerated go. Note that this is not a full-fledged stitching solution. It’s meant for pre-stitched content that needs a different mapping, or for footage from a panoramic camera. So the plugins are prosumer-ready right out of the gate, while high-end professional productions presumably have their own stitching thing going on, beautiful little snowflakes that they are. (StereoVR Toolbox can also take the output of standard stitching tools like Kolor or VideoStitch, obviously.)

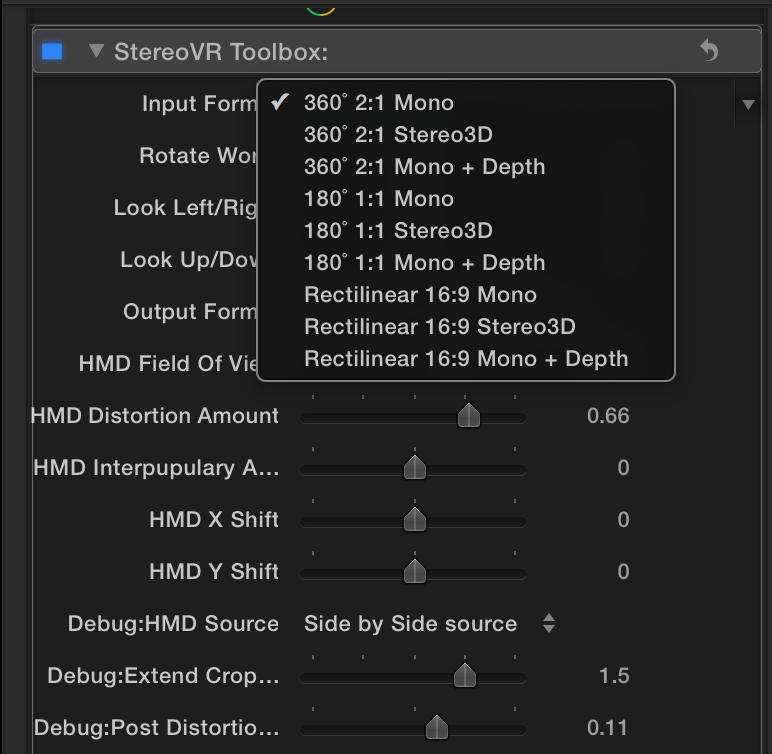

You can choose all your input parameters, too: whether you’re shooting 360 or 180, 2D or 3D, etc, the plugin supports it.

Another feature that’s obvious once you see it: panning. In case your camera array wasn’t pointed directly at the subject you wanted, you can ‘pan’ the virtual footage. You can make small nudges to tighten up the alignment, or huge movements to shift the camera 180 degrees. It’s not just another way to move creative decisions out of the cinematographer’s hands and into post, though: the way we think about composition in VR is totally different than 2D or stereo 3D, because the viewers can be looking in a wider field of view, and sometimes you don’t know where the weight of the shot will be until you cut it. Dashwood used some 360 footage to demonstrate the issue: you expect viewers to be looking at a fearsome male lion who’s walking toward the camera before the cut, but the footage on the other side of the transition is just background. Using the pan function, you can shift the second shot so the scene cuts from the lion to a water buffalo.
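To see why a pan like this is cheap, here is a minimal sketch. It assumes the footage is stored as an equirectangular (lat-long) frame covering the full 360 degrees, which I did not confirm at the demo, and `pan_equirect` is a made-up name, not part of the Toolbox. Under that assumption, turning the camera about its vertical axis is nothing more than a circular shift of pixel columns:

```python
def pan_equirect(frame, yaw_degrees):
    """Pan a 360-degree equirectangular frame about the vertical axis.

    frame: list of rows, each row a list of pixels (hypothetical layout).
    A positive yaw moves image content to the right and wraps it around.
    """
    width = len(frame[0])
    # Convert the angle into a whole number of pixel columns.
    shift = round(yaw_degrees / 360.0 * width) % width
    if shift == 0:
        return [list(row) for row in frame]
    # Take the last `shift` columns and move them to the front of each row.
    return [row[-shift:] + row[:-shift] for row in frame]


# A tiny 1 x 8 "frame": each pixel is just its own column index.
frame = [[0, 1, 2, 3, 4, 5, 6, 7]]
half_turn = pan_equirect(frame, 180)
```

A 180-degree pan moves whatever was behind the camera to the center of the frame, which is the lion-to-buffalo fix in miniature. Tilting up or down would need a real spherical resample, so this only covers the horizontal case.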

“You don’t want your users looking at, say, the wall after a transition,” Dashwood points out. Unless, you know, you’re into that. In fact, let me make a possibly stupid prediction: these tools are going to encourage more radical cutting in the future. Today’s VR footage tends to feature long takes and few cuts, both for fear of sickening the user and to maximize the sense of ‘presence’. With precision tools and live updates, though, editors can break new ground in VR experiences. For example, we’ll be able to make ourselves sick hundreds of times faster, so we can precisely dial in the viewer’s nausea. At the show, we hacked Dashwood’s pan function to create a new ‘Vomitron’ effect: we set a couple of keyframes and sent the user spinning wildly. It, um, worked as expected. (Don’t try this at home. Dashwood quickly deleted the keyframes.)

And as I constantly remind everyone who will listen, live action VR is hard, and 360 3D live action VR is as easy as violating physics. But the StereoVR Toolbox has a cool function carried over from Dashwood’s 3D toolbox plugin (yes, like much of VR, this project has its roots in stereo 3D): depth-mapping. By supplying a grayscale map, you can ‘bump’ footage right or left to create synthetic 3D. This relieves some of the physics-breaking necessary for super-wide-angle stereo 3D, and it might help rescue or supplement stereo rigs. And it’s another cool knob we can spin and play with. As far as Dashwood can tell, and I think I can corroborate, no other packages are doing this yet. (Full disclosure: we didn’t have time to play with it at the show, so I have no idea how it stacks up to true stereo 3D, but it sounds cool, doesn’t it? Dashwood has been working on this bump-mapping for years in his regular 3D suite, so it’s literally the most mature part of the plugin.)
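I don’t know how Dashwood’s version works under the hood, so treat the following as a rough sketch of the general idea rather than his method. The function name `synth_stereo_row`, the `max_shift` parameter, and the convention that brighter map values mean bigger offsets are all my assumptions. Each eye samples the source row at a horizontal offset taken from the grayscale map, in opposite directions:

```python
def synth_stereo_row(row, depth_row, max_shift=2):
    """Build left/right-eye rows from one image row and a grayscale map.

    row:       list of pixels for one scanline of the source image.
    depth_row: list of 0-255 gray values, same length as row.
    max_shift: largest horizontal offset in pixels (hypothetical knob).
    Offsets wrap around the row, which only makes sense for 360 footage.
    """
    width = len(row)
    left, right = [], []
    for x in range(width):
        # Assumed convention: brighter map value = larger horizontal offset.
        offset = round(depth_row[x] / 255 * max_shift)
        # Each eye samples the source in the opposite direction.
        left.append(row[(x + offset) % width])
        right.append(row[(x - offset) % width])
    return left, right


row = [10, 20, 30, 40]
depth = [0, 0, 255, 255]  # right half of the row gets bumped
left_eye, right_eye = synth_stereo_row(row, depth, max_shift=1)
```

Where the map is black the two eyes see identical pixels, and where it is white they diverge by `max_shift` pixels each way. A production tool would also have to deal with filtering and occlusion gaps, which this sketch ignores.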

Finally, a little love for the motion graphics folks. The StereoVR Toolbox supports Apple Motion and Adobe After Effects for motion graphics. The effect is identical to cutting on an FCP or Premiere timeline, so it’s seamless, but some fancy stuff may require a precomp. And the headset displays in realtime even if the timeline doesn’t, so if your ancient Mac Pro tower is spitting out a measly 2 frames per second, you can look around each frame with no latency while the next frame is served up. In other words, you don’t have to pre-render to preview your work, which is pretty important.
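The trick behind that last point is keeping head tracking separate from timeline playback. Here is a minimal sketch of that decoupling, with everything in it assumed for illustration: the `HeadsetPreview` class, its method names, and the equirectangular frame layout are mine, not the plugin’s. The timeline hands over a frame whenever it finishes one, and the headset side keeps re-sampling the most recent frame using the current head orientation:

```python
class HeadsetPreview:
    """Holds the latest rendered frame and samples it by head orientation."""

    def __init__(self):
        self.frame = None  # most recent frame from the (possibly slow) timeline

    def submit_frame(self, frame):
        # Called at timeline speed, e.g. twice a second on a slow machine.
        self.frame = frame

    def sample(self, yaw_degrees, pitch_degrees):
        """Return the pixel at the center of the view.

        Called at headset refresh rate, independent of submit_frame().
        Assumes an equirectangular frame: yaw 0 is the left edge,
        pitch +90 is the top row and pitch -90 is the bottom row.
        """
        height = len(self.frame)
        width = len(self.frame[0])
        u = (yaw_degrees % 360.0) / 360.0
        pitch = max(-90.0, min(90.0, pitch_degrees))
        v = (90.0 - pitch) / 180.0
        x = min(int(u * width), width - 1)
        y = min(int(v * height), height - 1)
        return self.frame[y][x]


preview = HeadsetPreview()
preview.submit_frame([["a", "b", "c", "d"],
                      ["e", "f", "g", "h"]])
# The viewer turns their head several times before the next frame arrives.
views = [preview.sample(0, 45), preview.sample(180, -45), preview.sample(270, 0)]
```

A real headset pipeline renders a full distorted view per eye rather than a single pixel, but the structure is the same: orientation is read fresh on every refresh, and the frame is whatever the timeline delivered last.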

Get it While It’s Hot

So, that’s how it works. As I mentioned earlier, it only works on a Mac, because it’s built on Quartz Composer. It really wants a dedicated GPU, not one of those all-in-one mobile chips. So probably no cutting on your MacBook Air, I’m afraid. But because it hits the GPU, it’s surprisingly CPU-friendly. Tim’s MacBook Pro chugged along just fine for the demos, which is promising.

Dashwood says the price is projected to be $1299, but early adopters will be able to get it half off. You can sign up for the beta at Dashwood3D.

And a Shameless Plug … for the kids

On a literally related note, Dashwood’s kids are already trying their hands at authoring plugins. The Star Wars superfans have authored a couple of plugins for animating blasters and lightsabers; you can check them out at FanFilmFX. Because who knows? Support them now, and maybe they’ll be authoring the neural-implant editing plugins we’re dying for at NAB 2046.